Algorithms from Scratch: AI/ML for Robotics

This page collects repos around a common theme: implementing and deriving AI/ML algorithms from scratch (i.e. with no high-level ML libraries), then applying them to real problems.

Since these are all in Python, that specifically means using nothing higher-level than numerical computing libraries (NumPy, Pandas) and plotting libraries (Matplotlib, Seaborn). Some repos also include reference implementations using sklearn, pytorch, etc. for comparison purposes.

Algorithms & topics are:

- Particle Filter for Robot Localization

- Hierarchical Planning & Control (Online A* + PID)

- Support Vector Machines (SVM) for Landmark Prediction on a Mobile Robot

- Deep Neural Networks from Scratch (applied to predict some basic nonlinear functions…nothing robotics-y here, but close enough)

Particle Filter for Robot Localization

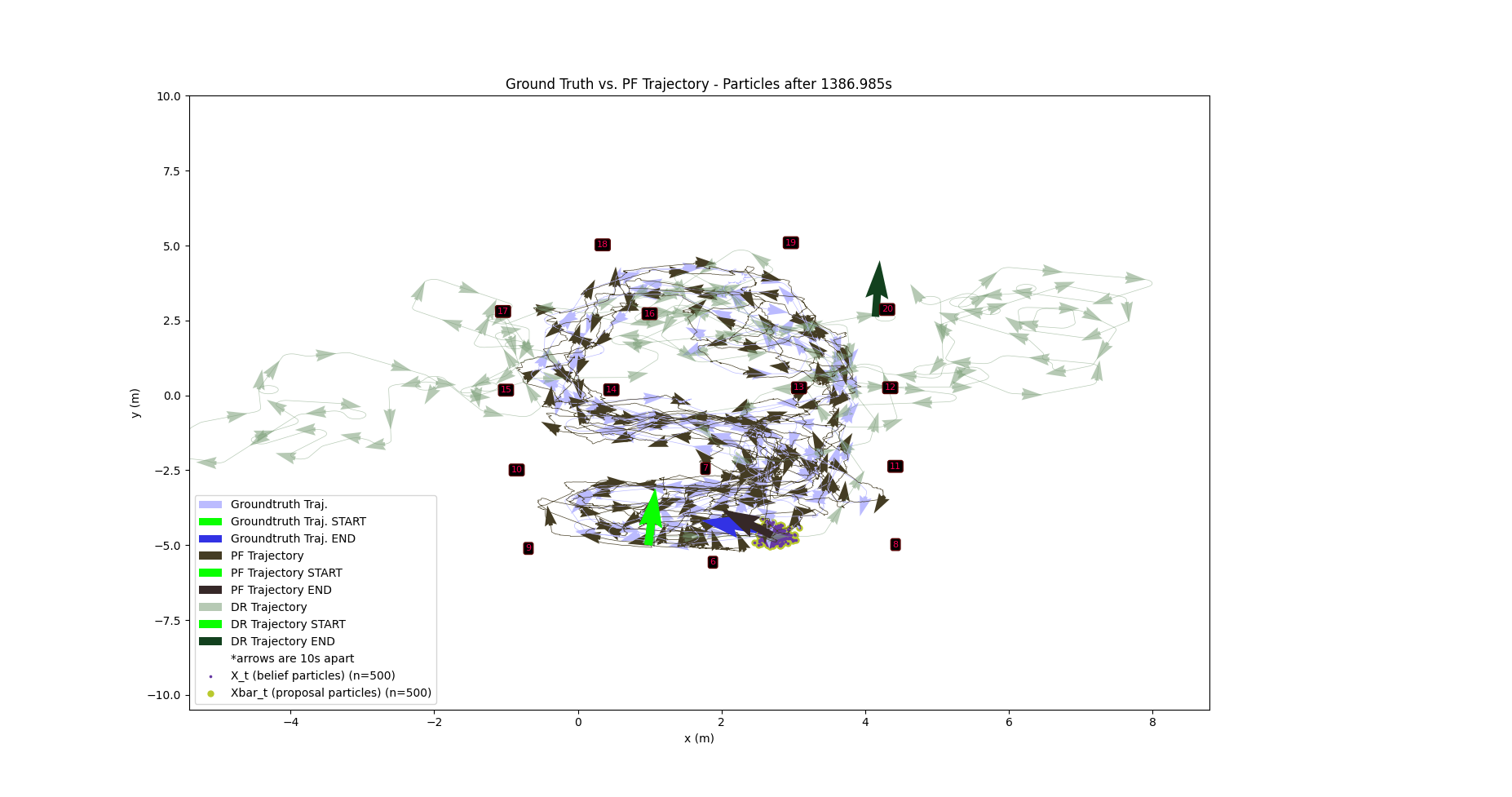

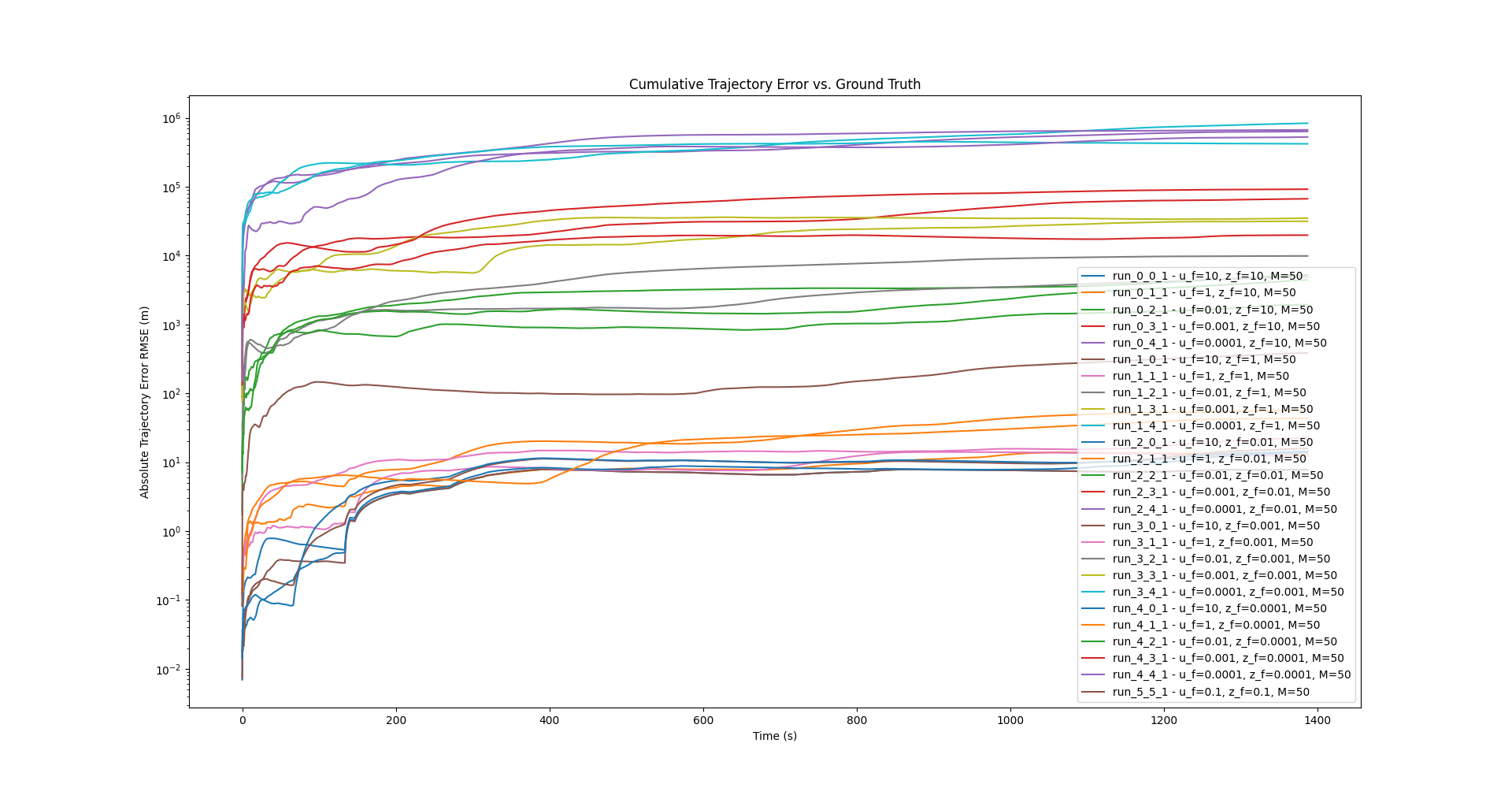

Implemented a particle filter from scratch for mobile robot localization, applied to real-world wheeled robot data from the UTIAS Multi-Robot Cooperative Localization and Mapping Dataset.

The filter estimates the robot’s 2D position and heading over time by combining:

- A motion model based on differential-drive kinematics

- A measurement model that computes expected range and bearing to known landmarks given LiDAR-based heading data, weighted via Gaussian likelihood

- Low-variance resampling for particle set updates (Probabilistic Robotics §4.3)
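As a rough illustration of the resampling step, here is a minimal NumPy sketch of low-variance (systematic) resampling. The function name `low_variance_resample` and its array layout are assumptions made for this example, not the repo's actual interface.

```python
import numpy as np

def low_variance_resample(particles, weights, rng=None):
    """Low-variance (systematic) resampling.

    particles: (M, D) array of states (hypothetical layout).
    weights:   (M,) non-negative importance weights, not necessarily normalized.
    Returns an (M, D) array of resampled particles.
    """
    rng = np.random.default_rng() if rng is None else rng
    weights = np.asarray(weights, dtype=float)
    M = len(weights)
    weights = weights / weights.sum()

    # Draw a single random offset, then place M evenly spaced pointers
    # across the cumulative weight distribution.
    r = rng.uniform(0.0, 1.0 / M)
    pointers = r + np.arange(M) / M

    cdf = np.cumsum(weights)
    cdf[-1] = 1.0  # guard against floating-point round-off

    # Each pointer selects the particle whose CDF interval contains it.
    idx = np.searchsorted(cdf, pointers, side="right")
    return particles[idx]
```

Because only one random number is drawn per resampling step, a particle set with uniform weights is reproduced exactly, which is the property that keeps particle diversity from eroding when no informative measurement arrives.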

Key Results: With tuned noise parameters, the particle filter tracked the ground truth trajectory closely while dead reckoning diverged rapidly due to compounding control uncertainty. Control noise had the largest impact on accuracy, especially during periods of missing landmark measurements when the filter only had odometry to rely on.
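The motion and measurement models listed above could look roughly like the sketch below. It assumes a simple Euler-integrated unicycle model driven by forward velocity `v` and angular velocity `w`, noise injected directly on the controls, and independent Gaussian errors on range and bearing. All names and the noise parameterization are hypothetical choices for this example.

```python
import numpy as np

def wrap_angle(a):
    """Wrap angle(s) to the interval [-pi, pi)."""
    return (a + np.pi) % (2.0 * np.pi) - np.pi

def motion_step(poses, v, w, dt, sigma_v=0.0, sigma_w=0.0, rng=None):
    """Propagate (M, 3) poses [x, y, theta] one step with noisy controls."""
    rng = np.random.default_rng() if rng is None else rng
    M = poses.shape[0]
    # Each particle gets its own perturbed copy of the commanded controls.
    v_n = v + sigma_v * rng.standard_normal(M)
    w_n = w + sigma_w * rng.standard_normal(M)
    x, y, th = poses[:, 0], poses[:, 1], poses[:, 2]
    new = np.empty_like(poses)
    new[:, 0] = x + v_n * dt * np.cos(th)
    new[:, 1] = y + v_n * dt * np.sin(th)
    new[:, 2] = wrap_angle(th + w_n * dt)
    return new

def measurement_weights(poses, landmark_xy, z_range, z_bearing, sigma_r, sigma_b):
    """Unnormalized Gaussian likelihood of one range/bearing measurement."""
    dx = landmark_xy[0] - poses[:, 0]
    dy = landmark_xy[1] - poses[:, 1]
    expected_range = np.hypot(dx, dy)
    expected_bearing = wrap_angle(np.arctan2(dy, dx) - poses[:, 2])
    err_r = z_range - expected_range
    err_b = wrap_angle(z_bearing - expected_bearing)
    return np.exp(-0.5 * ((err_r / sigma_r) ** 2 + (err_b / sigma_b) ** 2))
```

Calling `motion_step` repeatedly without ever reweighting is effectively dead reckoning: every step adds control noise that nothing corrects, which matches the divergence described above.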


Hierarchical Planning & Control (Online A* + PID)

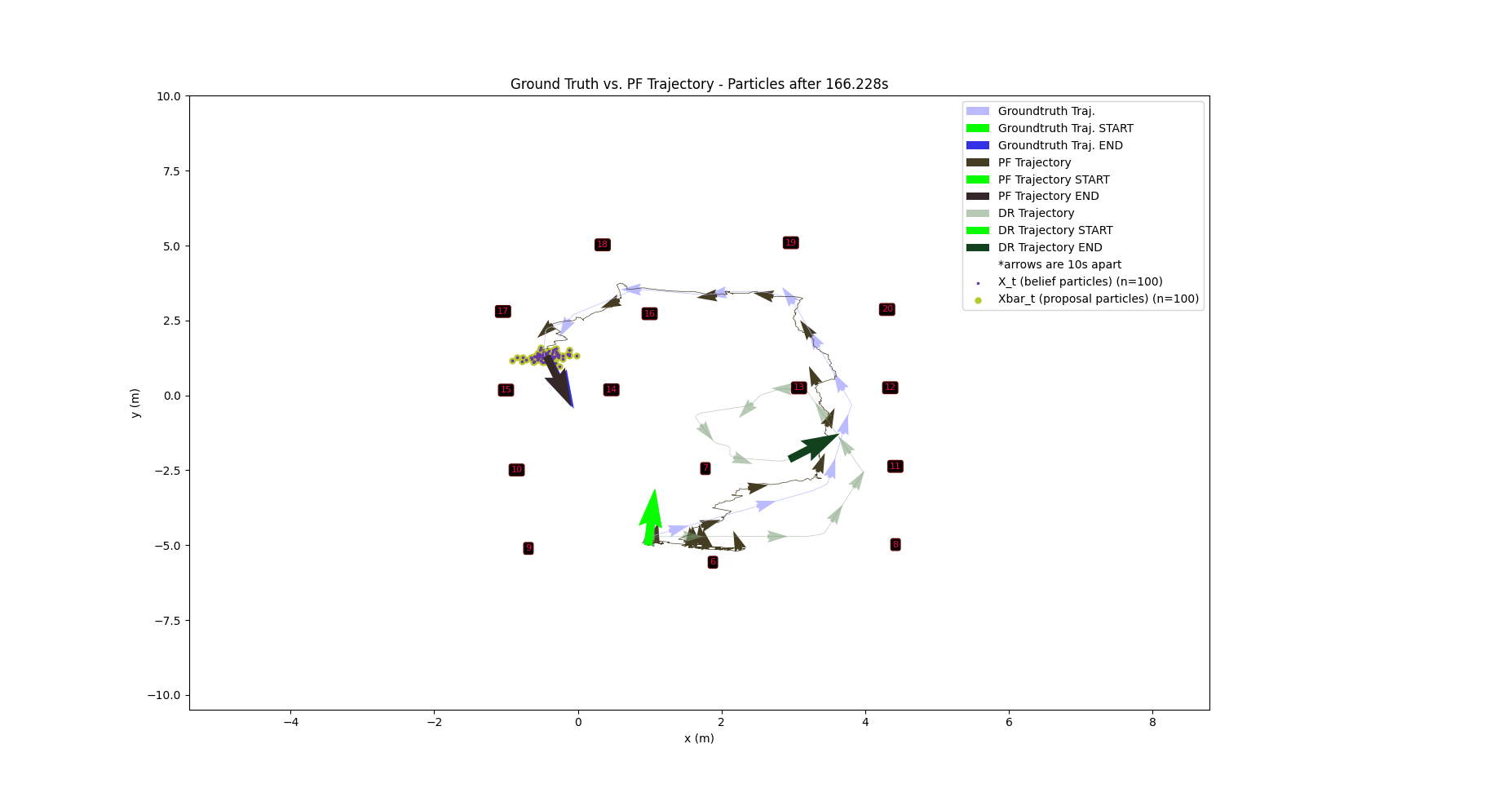

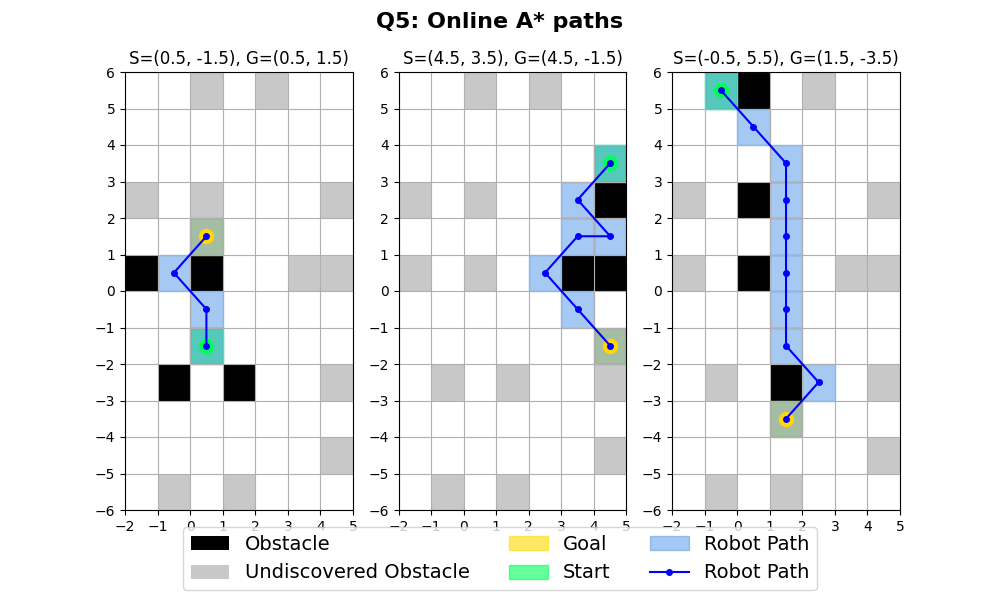

Implemented A* path planning with closed-loop waypoint control for a differential-drive robot, applied in simulation to an arena with obstacles sourced from the UTIAS Multi-Robot Cooperative Localization and Mapping Dataset (same as above).

This repo builds up to the full implementation described above via the following components:

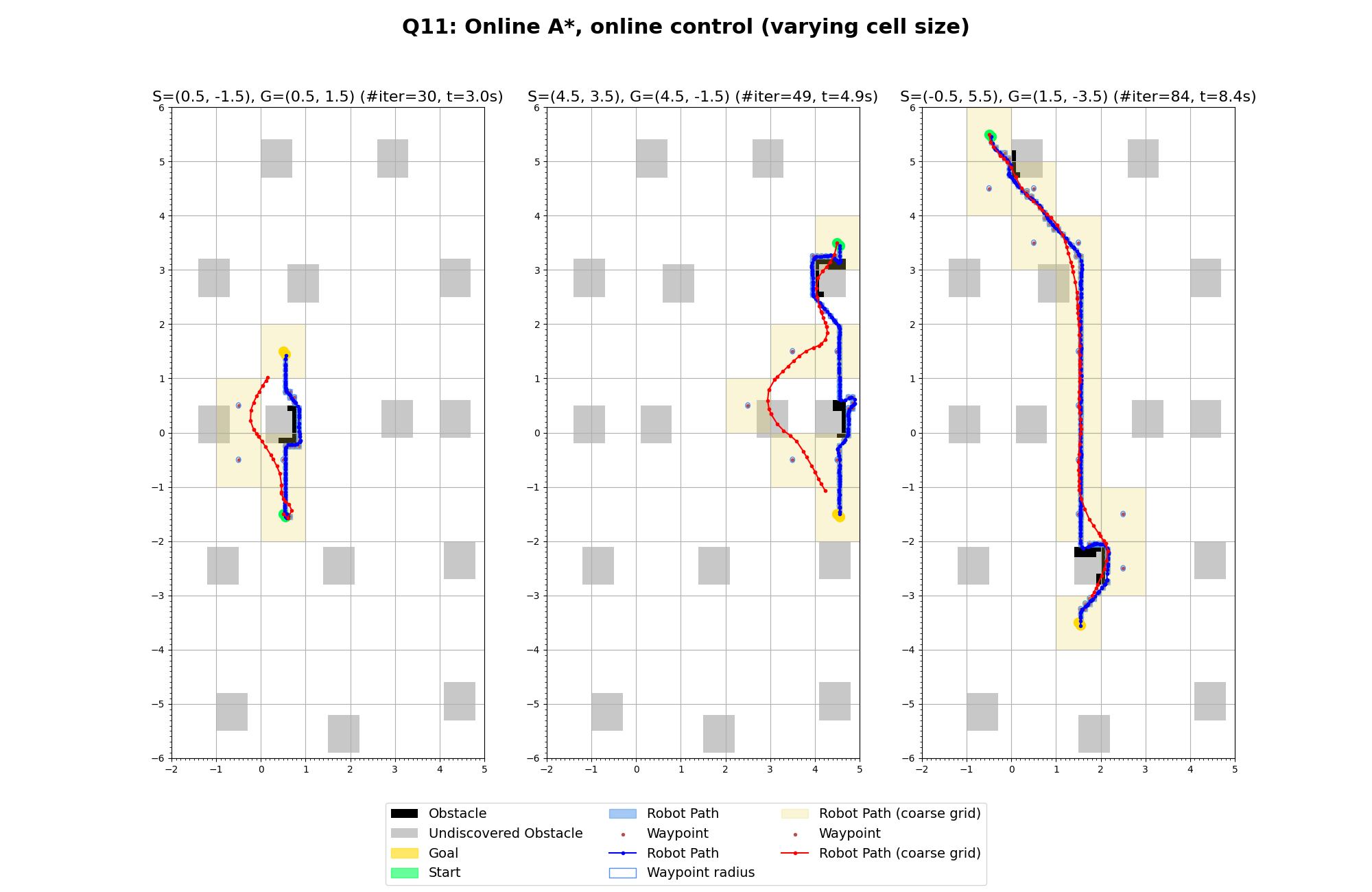

- Offline A* path planning on a fully-known obstacle grid.

- Online A*, which wraps offline A* to plan with incremental obstacle discovery (the robot observes obstacles only when adjacent to them and recalculates its path at each step).

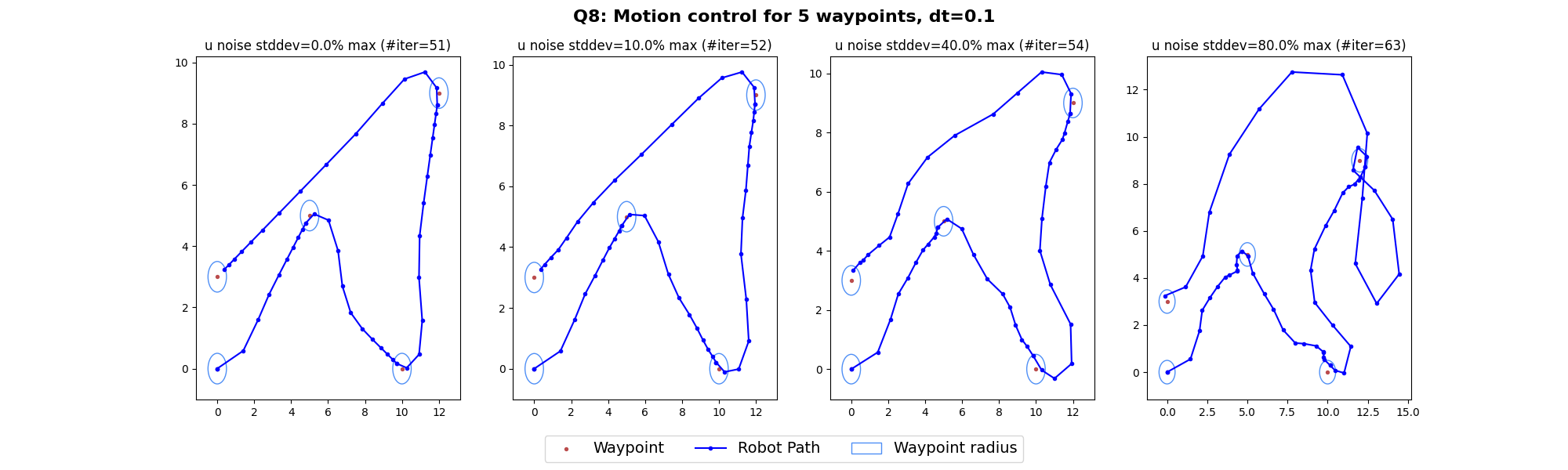

- A dual P-controller that drives the robot toward waypoints with configurable gains, biases, and acceleration limits

- A robot navigation simulator (RobotNavSim) that propagates control outputs through the motion model with Gaussian noise
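For reference, the offline planner in the list above can be sketched in a few dozen lines. This version assumes a 4-connected occupancy grid with unit move cost and a Manhattan-distance heuristic; the repo's actual connectivity, costs, and heuristic may differ, and the `astar` signature is hypothetical.

```python
import heapq

def astar(grid, start, goal):
    """A* on a 2D occupancy grid (list of lists; truthy = obstacle).

    start, goal: (row, col) tuples. Returns the path as a list of cells,
    or None if the goal is unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance: admissible for 4-connected unit-cost moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]  # entries are (f, g, cell)
    g_cost = {start: 0}
    parent = {start: None}
    closed = set()

    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell in closed:
            continue  # stale heap entry
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        closed.add(cell)
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if not (0 <= nr < rows and 0 <= nc < cols) or grid[nr][nc]:
                continue
            new_g = g + 1
            if new_g < g_cost.get(nxt, float("inf")):
                g_cost[nxt] = new_g
                parent[nxt] = cell
                heapq.heappush(open_heap, (new_g + h(nxt), new_g, nxt))
    return None
```

An online variant in the spirit of the description above would call a function like this again from the robot's current cell each time a newly observed obstacle is written into `grid`.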

Key Results: Offline A* found optimal paths with full map knowledge, while online A* successfully adapted as obstacles were revealed, sometimes taking detours when initially planned routes became blocked. Padding the collision region of obstacles by 0.3 m was essential for safe execution under noise, because the controller tends to cut corners. The P-controller handled all test paths successfully at low-to-moderate noise levels, and simultaneous planning and driving handled control noise more gracefully than post-hoc control, since deviations automatically triggered replanning.
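To make the waypoint controller concrete, here is a minimal sketch of a dual proportional controller: one loop on distance to the waypoint and one on heading error. The gains and limits are placeholder values, the function name is hypothetical, and the biases and acceleration limits mentioned above are left out for brevity (only simple output saturation is shown).

```python
import math

def p_controller(pose, waypoint, k_v=0.5, k_w=2.0, v_max=0.3, w_max=1.5):
    """Return (v, w) commands steering pose=(x, y, theta) toward waypoint=(x, y).

    k_v, k_w:     proportional gains (illustrative values, not tuned).
    v_max, w_max: saturation limits on linear and angular velocity.
    """
    x, y, theta = pose
    dx, dy = waypoint[0] - x, waypoint[1] - y
    dist = math.hypot(dx, dy)

    # Heading error, wrapped to [-pi, pi] so the robot turns the short way.
    heading_err = math.atan2(dy, dx) - theta
    heading_err = math.atan2(math.sin(heading_err), math.cos(heading_err))

    v = max(-v_max, min(v_max, k_v * dist))
    w = max(-w_max, min(w_max, k_w * heading_err))
    return v, w
```

Because the linear command does not wait for the heading error to vanish, the robot drives forward while still turning, which is one way a controller of this kind ends up cutting corners near obstacles.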


Support Vector Machines (SVM) for Landmark Prediction

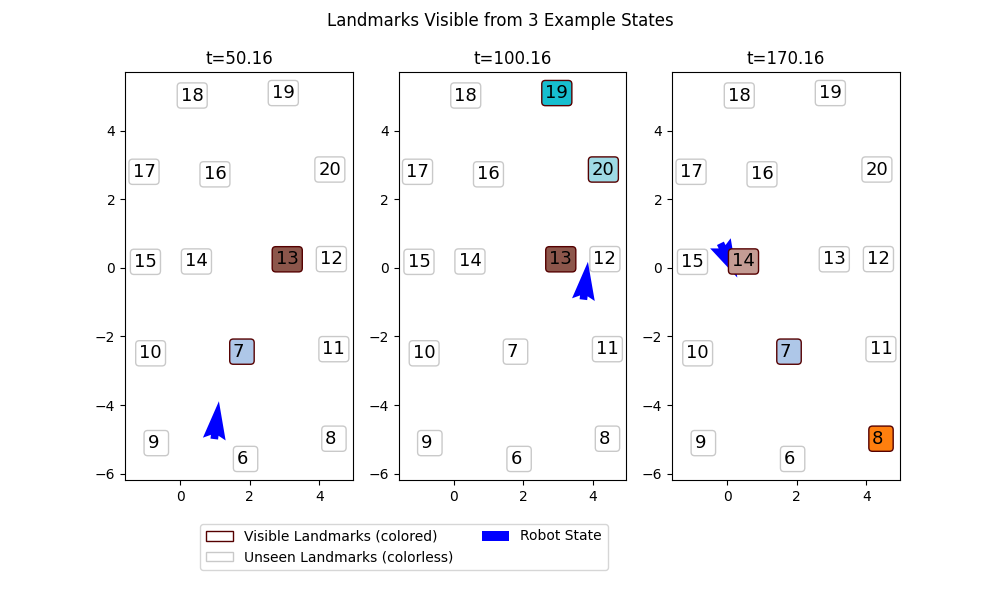

This repo implements a Support Vector Machine (SVM) classifier with an RBF kernel to predict landmark visibility for a mobile wheeled robot, given the robot’s current pose (x, y, θ). It is applied to real-world data from the UTIAS Multi-Robot Cooperative Localization and Mapping Dataset (same as above).

The SVM is implemented from scratch; scikit-learn is used only for evaluation utilities (confusion matrix display) and comparison experiments.

A full writeup including problem framing, algorithm derivation, and discussion of results is available in writeup.pdf.

The implementation includes:

- A from-scratch SVM classifier with RBF kernel, solving the quadratic programming problem via the

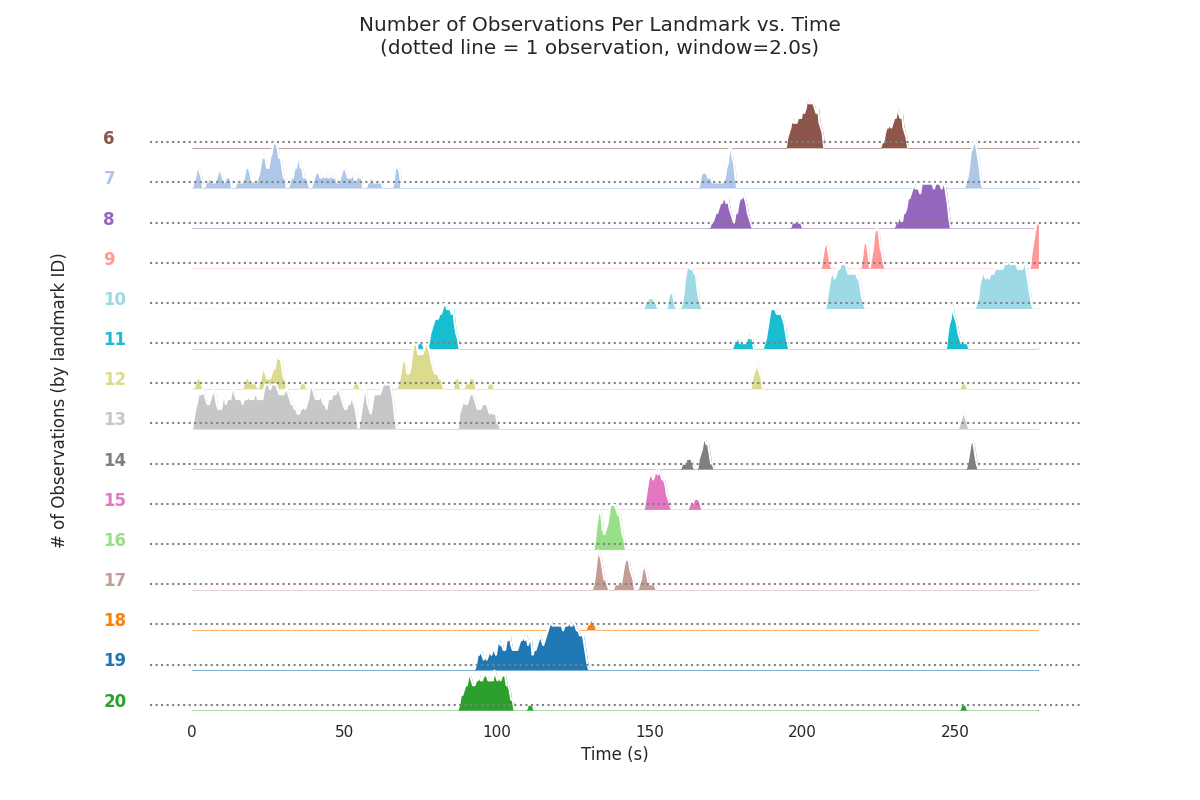

clarabelsolver (throughqpsolvers) - A dataset preprocessing pipeline that generates binary visibility labels from raw range measurements using a 2-second sliding window

- A multi-label classifier that trains one SVM per landmark (N=15 landmarks) and aggregates results

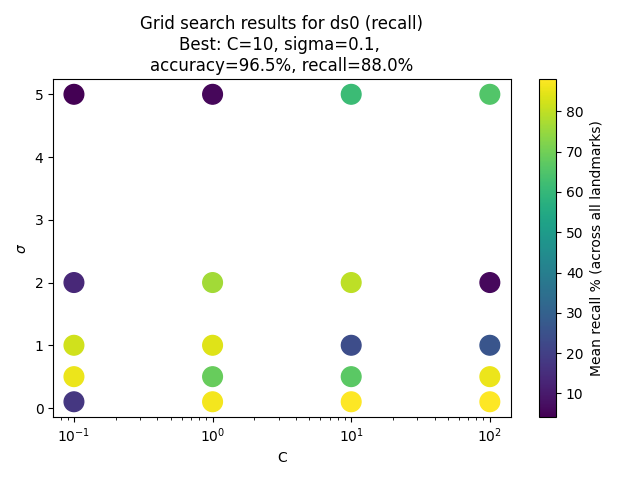

- A grid search over hyperparameters C and σ to select the best model
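The quadratic program behind a kernel SVM can be written down compactly. The sketch below builds the matrices of the standard soft-margin dual, minimize ½ αᵀPα − 1ᵀα subject to yᵀα = 0 and 0 ≤ α ≤ C, and assumes the RBF kernel is parameterized as exp(−‖x − x′‖² / (2σ²)) with labels in {−1, +1}. The function names and that kernel convention are assumptions for this example; see writeup.pdf for the derivation actually used.

```python
import numpy as np

def rbf_kernel(A, B, sigma):
    """Gram matrix K[i, j] = exp(-||A[i] - B[j]||^2 / (2 sigma^2))."""
    sq = (np.sum(A**2, axis=1)[:, None]
          + np.sum(B**2, axis=1)[None, :]
          - 2.0 * A @ B.T)
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * sigma**2))

def svm_dual_qp(X, y, C, sigma):
    """Matrices for: min 0.5 a^T P a + q^T a  s.t.  A a = b, lb <= a <= ub."""
    n = X.shape[0]
    K = rbf_kernel(X, X, sigma)
    P = (y[:, None] * y[None, :]) * K   # P[i, j] = y_i y_j K(x_i, x_j)
    q = -np.ones(n)
    A = y.astype(float).reshape(1, n)   # equality constraint: sum_i a_i y_i = 0
    b = np.zeros(1)
    lb = np.zeros(n)                    # box constraint: 0 <= a_i <= C
    ub = np.full(n, float(C))
    return P, q, A, b, lb, ub

def decision_function(X_train, y_train, alpha, bias, X_new, sigma):
    """f(x) = sum_i alpha_i y_i K(x, x_i) + bias; the sign gives the class."""
    return rbf_kernel(X_new, X_train, sigma) @ (alpha * y_train) + bias
```

These are in the form a generic QP solver expects; with qpsolvers that would presumably be a call along the lines of `solve_qp(P, q, A=A, b=b, lb=lb, ub=ub, solver="clarabel")`, though the exact call in the repo is not shown here.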

Key Results:

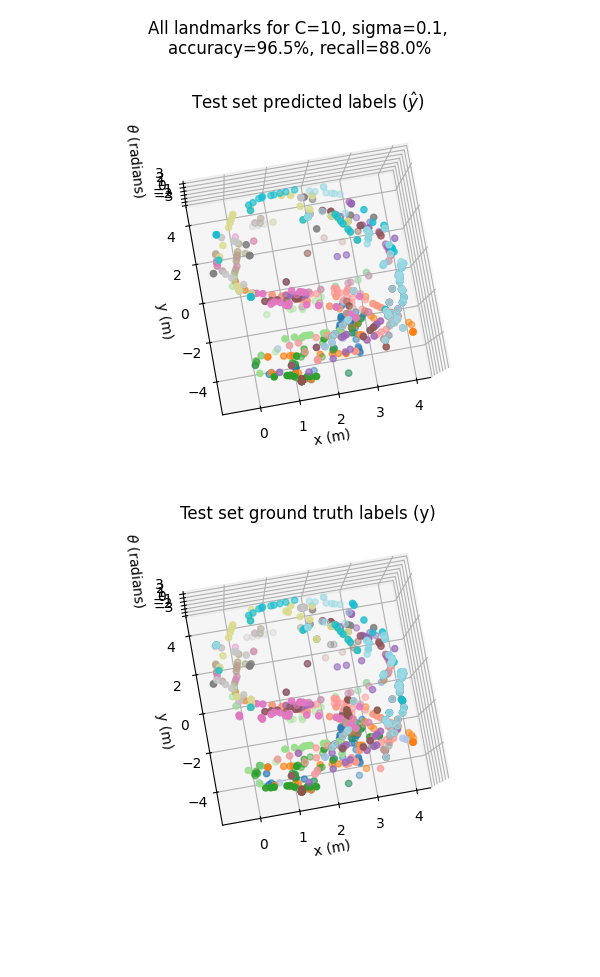

- The RBF kernel was selected after an initial qualitative exploration of kernel types, motivated by the observation that visibility regions in (x, y, θ) space are not linearly separable.

- Robot orientation is encoded as (sin θ, cos θ) rather than raw θ to avoid angle-wrapping discontinuities in the input space.

- Grid search over C ∈ {0.1, 1, 10, 100} and σ ∈ {0.1, 0.5, 1, 2, 5} identified (C=10, σ=0.1) as the best configuration, balancing accuracy and recall while limiting overfitting risk.

- The final classifier achieves 97% accuracy and 88% recall on a held-out 20% test set (randomly shuffled to avoid trajectory-ordering bias).

- Recall is the more informative metric here, since the class imbalance (most landmarks are invisible most of the time) means a trivial all-negative classifier would score deceptively high on accuracy alone.
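Two of the points above are easy to check numerically: the (sin θ, cos θ) encoding keeps nearly identical headings close together in feature space, and accuracy alone rewards a trivial all-negative classifier under class imbalance. The helper names below are hypothetical, labels are assumed to be 0/1, and the 10% positive rate is a made-up figure for illustration, not a statistic from the dataset.

```python
import numpy as np

def encode_pose(x, y, theta):
    """Stack pose into features [x, y, sin(theta), cos(theta)]."""
    return np.column_stack([x, y, np.sin(theta), np.cos(theta)])

def accuracy_and_recall(y_true, y_pred):
    """Accuracy and recall for binary 0/1 labels."""
    y_true = np.asarray(y_true, dtype=bool)
    y_pred = np.asarray(y_pred, dtype=bool)
    accuracy = np.mean(y_true == y_pred)
    positives = y_true.sum()
    recall = (y_true & y_pred).sum() / positives if positives else float("nan")
    return float(accuracy), float(recall)

# Two headings 0.02 rad apart, on either side of the +/- pi wrap.
theta = np.array([np.pi - 0.01, -np.pi + 0.01])
feats = encode_pose(np.zeros(2), np.zeros(2), theta)
raw_gap = abs(theta[0] - theta[1])                 # large gap in raw theta
encoded_gap = np.linalg.norm(feats[0] - feats[1])  # small gap after encoding

# Illustrative imbalance: 10 visible samples out of 100, predicted all-negative.
y_true = np.array([1] * 10 + [0] * 90)
y_pred = np.zeros(100, dtype=int)
acc, rec = accuracy_and_recall(y_true, y_pred)
```

Here the all-negative predictor is right on every negative sample and wrong on every positive one, so its accuracy equals the negative class share while its recall is zero.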


Deep Neural Networks from Scratch

This repo contains various homework assignments for Northwestern’s MSAI 437 Deep Learning course.

Of particular relevance to the “from scratch” theme of this page is HW1, in which I implement a basic general-purpose MLP framework from scratch using numpy and matplotlib, taking pytorch as inspiration for the API. I then apply it to a variety of toy classification problems on ℝ², achieving near-parity with a pytorch implementation with the same hyperparameter tuning on all 5 datasets.
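To give a flavor of what a pytorch-inspired MLP framework in pure numpy can look like, here is a minimal sketch with layers exposing `forward`, `backward`, and `step`. The class names, the He-style initialization, plain gradient descent, and the MSE loss are all assumptions chosen to keep the example short; the actual HW1 code and its classification losses may look quite different.

```python
import numpy as np

class Linear:
    def __init__(self, n_in, n_out, rng):
        self.W = rng.standard_normal((n_in, n_out)) * np.sqrt(2.0 / n_in)
        self.b = np.zeros(n_out)

    def forward(self, x):
        self.x = x  # cache input for the backward pass
        return x @ self.W + self.b

    def backward(self, grad_out):
        self.dW = self.x.T @ grad_out
        self.db = grad_out.sum(axis=0)
        return grad_out @ self.W.T  # gradient w.r.t. the layer input

    def step(self, lr):
        self.W -= lr * self.dW
        self.b -= lr * self.db

class ReLU:
    def forward(self, x):
        self.mask = x > 0
        return x * self.mask

    def backward(self, grad_out):
        return grad_out * self.mask

    def step(self, lr):
        pass  # no parameters to update

class Sequential:
    def __init__(self, *layers):
        self.layers = layers

    def forward(self, x):
        for layer in self.layers:
            x = layer.forward(x)
        return x

    def backward(self, grad):
        for layer in reversed(self.layers):
            grad = layer.backward(grad)
        return grad

    def step(self, lr):
        for layer in self.layers:
            layer.step(lr)

def mse_loss(pred, target):
    """Return (loss value, gradient of the loss w.r.t. pred)."""
    diff = pred - target
    return float(np.mean(diff ** 2)), 2.0 * diff / diff.size
```

A training loop under these assumptions is three calls per iteration: `loss, g = mse_loss(net.forward(x), t)`, then `net.backward(g)`, then `net.step(lr)`.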

After HW1, all assignments are implemented in pytorch, building up more complicated networks (autoencoder, diffusion model), so they don’t fit into the topic of this page.